Weekly Hallucinations: Opus 4.6 vs. GPT-5.3-Codex and the Super Bowl Ad War

Anthropic and OpenAI released their flagships, Claude Opus 4.6 and GPT-5.3-Codex, just half an hour apart. I never tire of being surprised that OpenAI publishes its releases in Russian as well. Only the Codex version shipped, not the entire GPT-5.3 lineup at once. Perhaps they were afraid of falling behind the competition.

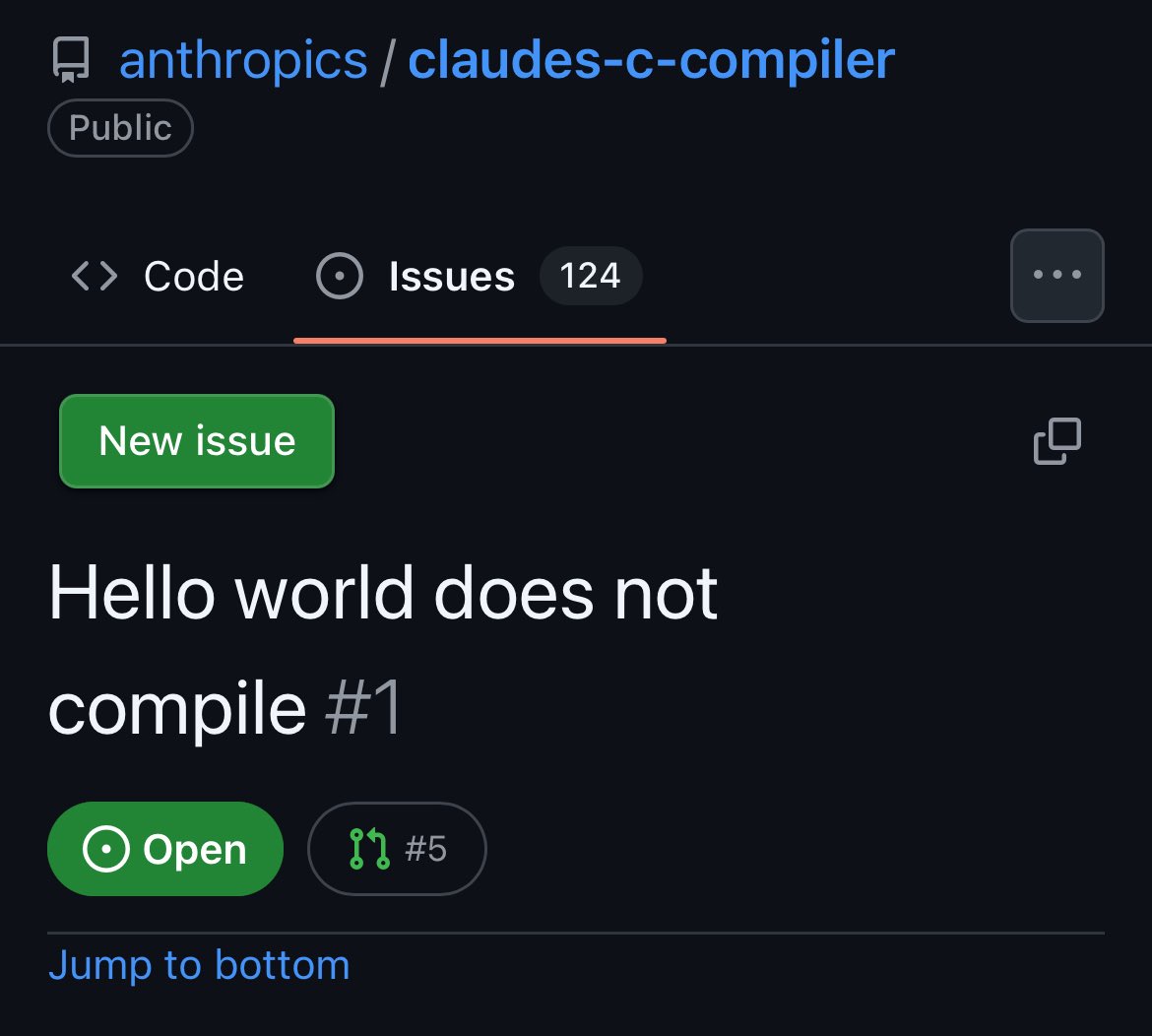

Opus 4.6 received a 1M-token context window (currently in beta), adaptive thinking, and a serious leap on ARC-AGI-2 (an abstract reasoning test): from 37.6% to 68.8%. Humans score about 95%. But the main story of the week is Anthropic's agentic teams, which built a C compiler from scratch without internet access. The result is ~100K lines of code that can build a bootable Linux 6.9 and compile QEMU and FFmpeg. Admittedly, people are already writing in the issues that Hello World won't compile. Hahaha, classic… Separately, Opus found 500+ zero-day vulnerabilities in open-source projects, some of them decades old. Anthropic also showcased Opus 4.6 Fast: 2.5 times faster, but 6 times more expensive. Safonov, pay up!

GPT-5.3-Codex is also impressive. The collaboration with NVIDIA on GB200 reportedly delivered nearly a 3x inference speedup. On Terminal-Bench 2 it scores 77.3% vs. 65% for Opus. Early versions of 5.3 helped debug their own training pipeline. It picked up a million active users in the first week, and I'm one of them. It really is faster than 5.2-Codex, though not quite 3 times faster.

Anthropic surprised everyone with a Super Bowl ad mocking Sam Altman's recent statement about adding ads to ChatGPT (on the Free and Go plans). There are no plans to add ads to Claude. Sam Altman didn't stay silent, claiming that more Texans use ChatGPT for free than there are Claude users in the entire United States. Pass the popcorn.

For self-hosters: LM Studio 0.4.1 added an Anthropic-compatible API. You can point Claude Code to local GGUF/MLX models by changing the base URL. Details in the blog.
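To make "changing the base URL" concrete, here is a minimal sketch of a request in the Anthropic Messages format aimed at a local server. The address `http://localhost:1234`, the model name `local-model`, and the placeholder key are my assumptions, not taken from the LM Studio docs, so check the blog for the real values. Claude Code itself is usually redirected with an environment variable (`ANTHROPIC_BASE_URL`, as far as I know) rather than with code like this.

```python
import json
import urllib.request


def build_messages_request(base_url, model, prompt, max_tokens=256):
    """Build (but do not send) a POST request in the Anthropic Messages format."""
    url = base_url.rstrip("/") + "/v1/messages"
    payload = {
        "model": model,  # hypothetical: whatever name the local server exposes
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    headers = {
        "content-type": "application/json",
        "anthropic-version": "2023-06-01",
        "x-api-key": "not-needed-locally",  # assumption: a local server ignores the key
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def send(request):
    """Send the request and return the decoded JSON response."""
    with urllib.request.urlopen(request) as response:
        return json.load(response)


# Assumed local address; swap in whatever your server actually listens on.
request = build_messages_request("http://localhost:1234", "local-model", "Say hi")
```

Calling `send(request)` only works while a compatible server is running at that address. The point is that only `base_url` changes between the hosted API and a local model.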

And about Context Graphs. Jaya Gupta's concept is gaining momentum. The problem is real: an agent makes hundreds of decisions per session, tries approaches, and hits errors. All these traces are currently lost between sessions. Context Graphs propose storing them as a graph and reusing them. Cursor has already rolled out the first Agent Trace specification for coding agents, and several companies have backed it. Dharmesh Shah from HubSpot was skeptical: many promises, little concrete detail. I agree. But the problem exists, and whoever solves it first in practice will take the market.
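To get a feel for what "storing traces as a graph and reusing them" could mean, here is a toy sketch. It is not the Agent Trace specification and not Jaya Gupta's design: the node kinds, relation names, and the `TraceGraph` class are all made up for illustration. The idea is simply that attempts, errors, and fixes become nodes, and a later session can load the graph and ask what was already tried.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class TraceNode:
    node_id: str
    kind: str  # hypothetical kinds: "task", "attempt", "error", "fix"
    text: str


@dataclass
class TraceGraph:
    nodes: dict = field(default_factory=dict)
    edges: list = field(default_factory=list)  # (source, relation, target)

    def add_node(self, node_id, kind, text):
        self.nodes[node_id] = TraceNode(node_id, kind, text)

    def add_edge(self, source, relation, target):
        if source not in self.nodes or target not in self.nodes:
            raise KeyError("both endpoints must exist before linking them")
        self.edges.append((source, relation, target))

    def neighbors(self, source, relation):
        """Return nodes reachable from `source` over edges named `relation`."""
        return [self.nodes[t] for s, r, t in self.edges if s == source and r == relation]

    def to_json(self):
        """Serialize the graph so it survives between sessions."""
        return json.dumps({
            "nodes": [asdict(node) for node in self.nodes.values()],
            "edges": self.edges,
        })

    @classmethod
    def from_json(cls, raw):
        data = json.loads(raw)
        graph = cls()
        for node in data["nodes"]:
            graph.add_node(node["node_id"], node["kind"], node["text"])
        for source, relation, target in data["edges"]:
            graph.add_edge(source, relation, target)
        return graph


# Session 1: the agent records what it tried and what went wrong.
graph = TraceGraph()
graph.add_node("t1", "task", "make the test suite pass")
graph.add_node("a1", "attempt", "bump the dependency version")
graph.add_node("e1", "error", "import error after the bump")
graph.add_edge("t1", "tried", "a1")
graph.add_edge("a1", "failed_with", "e1")
saved = graph.to_json()

# Session 2: a fresh agent loads the graph and sees the earlier dead end.
restored = TraceGraph.from_json(saved)
already_tried = [node.text for node in restored.neighbors("t1", "tried")]
```

A real system would also need to decide what is worth recording and how to find relevant traces, which is exactly where the concrete detail is still missing.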

By the way, exactly a year ago, Andrej Karpathy coined the term "vibe coding." This week, he suggested a new one: Agentic Engineering. If vibe coding is a jam session, then Agentic Engineering is an orchestra with a conductor and a score. But Karpathy himself noted that fully autonomous development is still far away. Agents chase percentage improvements with high hidden costs, skip validation, and ignore repository style. For now, the formula is: it's useful, but you have to keep an eye on the agents.

Anthropic and OpenAI are releasing flagships on the same day, like Formula 1 teams unveiling their new cars simultaneously. Except in Formula 1, you at least know who to root for: the seven-time champion.