Weekly Hallucinations: Claude Code Source Leak, Gemma 4, Qwen 3.6-Plus, and the Tamagotchi They Weren't Supposed to Find

512 thousand lines of TypeScript were exposed to the public, Anthropic fired off DMCA notices at 8,100 GitHub repositories matching the keyword "claude-code", and Google finally released an open model with a normal license. On this channel, you draw your own conclusions.

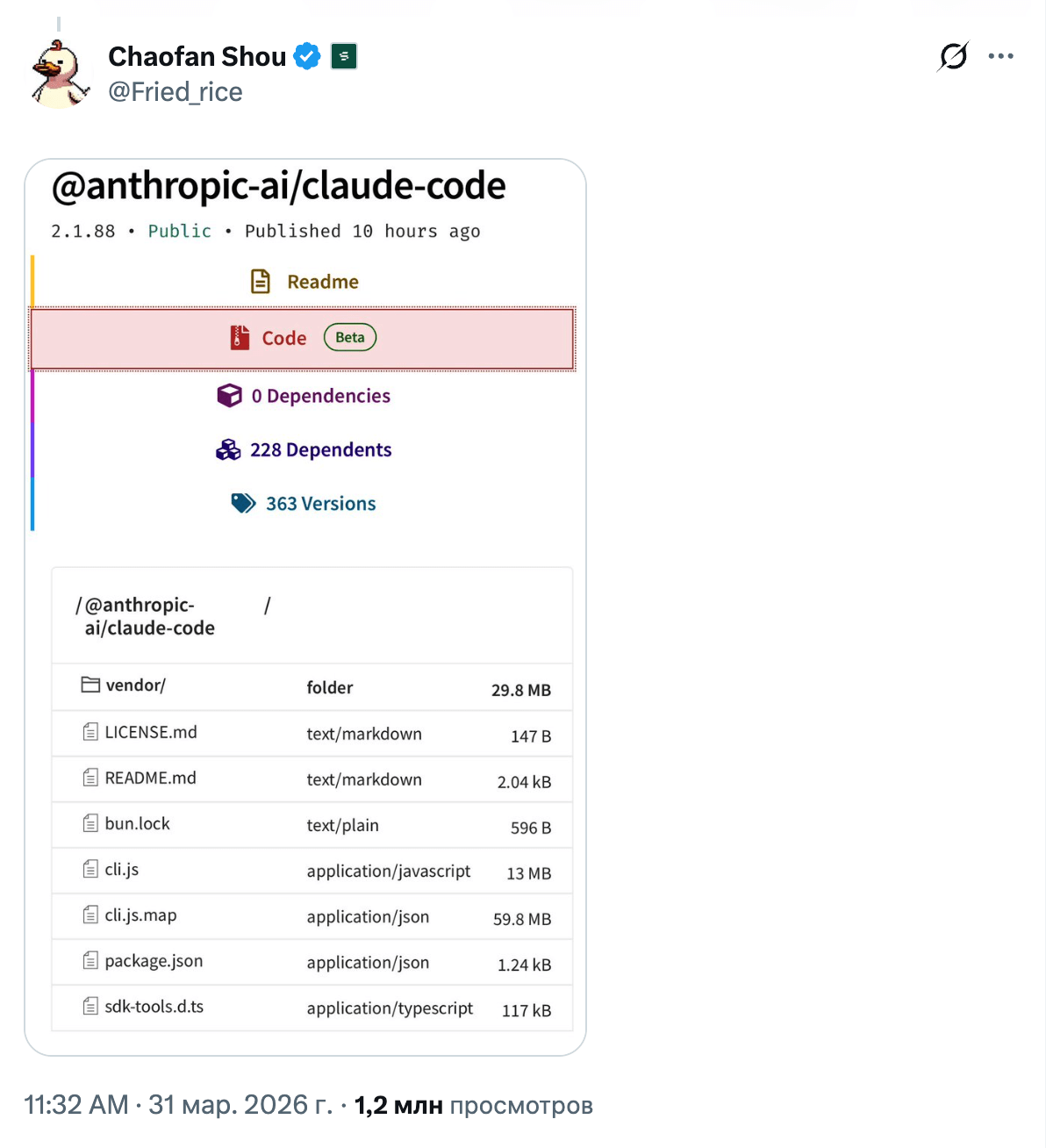

On March 31, 23-year-old Solayer Labs intern Chaofan Shao discovered that version 2.1.88 of the npm package @anthropic-ai/claude-code contained a 59 MB file named cli.js.map: a source map with the full source code embedded. 512 thousand lines of TypeScript, 1,900 files. His post about the find got 28 million views, and by morning the code was on every other screen in AI Twitter.
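
Why does a .map file amount to a source leak? The source map v3 format has a `sources` array of file paths and an optional `sourcesContent` array holding the original text of each file. When the second one is populated, recovering the sources is a few lines of JSON handling. A minimal sketch on a tiny hand-made map; the file names and contents below are invented for illustration and have nothing to do with the real cli.js.map:

```python
import json


def extract_sources(source_map_text: str) -> dict[str, str]:
    """Return {path: original source} for every file embedded in a v3 source map."""
    data = json.loads(source_map_text)
    sources = data.get("sources", [])
    contents = data.get("sourcesContent") or []
    recovered = {}
    for path, content in zip(sources, contents):
        # An entry may be null when that particular file was not embedded.
        if content is not None:
            recovered[path] = content
    return recovered


# Hypothetical stand-in for a real source map.
demo_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts", "src/util.ts"],
    "sourcesContent": ["export const main = () => 1;\n", None],
    "mappings": "",
})

recovered = extract_sources(demo_map)
```

Only `src/main.ts` comes back here, because the second entry has no embedded content.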

Anthropic ended up in the kind of situation it would itself describe as a "catastrophic information leak" in one of its safety reports. Claude Code, one of the company’s most profitable products, landed on GitHub completely exposed. Boris Cherny from Anthropic confirmed the cause: "human error" in a manual deployment step, and nobody was fired. The company responded with a DMCA campaign targeting 8,100 repositories, but after a wave of criticism quickly walked it back to one repo and 96 forks.

And then the collective code review began.

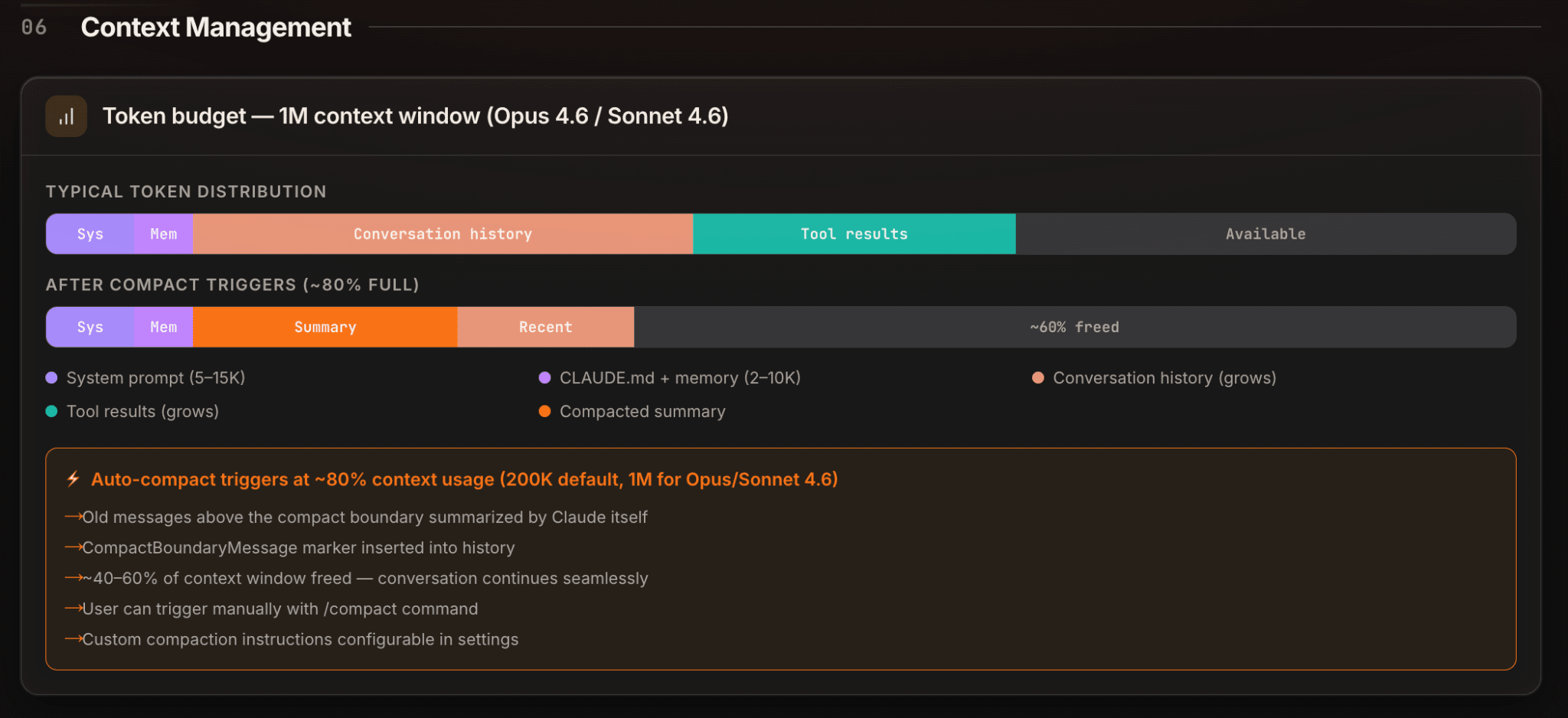

ccleaks.com broke down the architecture piece by piece: boot sequence, tool system, query loop. Others turned the leak into full documentation. Sebastian Raschka, ML book author, compiled a top-6 list of findings: repo state in context, aggressive caching, custom Grep/Glob/LSP, deduplication of read files, structured session memory, and subagents. A thread by ZhihuFrontier dug up a four-layer context compression system: HISTORY_SNIP, Microcompact, CONTEXT_COLLAPSE, and Autocompact. Plus 40+ tools, streaming with parallel tool calls, silent retries when responses were cut off, and a pile of feature flags.
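
Of the findings above, deduplication of read files is the easiest one to picture. A minimal sketch, assuming the harness remembers a content hash per path and answers an unchanged re-read with a short stub instead of the full text. The class name, the stub wording, and the choice of SHA-256 are assumptions made for illustration, not the leaked implementation:

```python
import hashlib


class ReadDeduplicator:
    """Avoid putting the same file content into the context twice (illustrative)."""

    def __init__(self) -> None:
        self._seen: dict[str, str] = {}  # path -> hash of the content already sent

    def read(self, path: str, content: str) -> str:
        digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
        if self._seen.get(path) == digest:
            # Same bytes as last time: send a cheap placeholder instead.
            return f"[{path} unchanged since last read]"
        self._seen[path] = digest
        return content


dedup = ReadDeduplicator()
first = dedup.read("app.ts", "const a = 1;")
second = dedup.read("app.ts", "const a = 1;")
third = dedup.read("app.ts", "const a = 2;")
```

The first and third reads return the file text, while the second returns only the placeholder, which is where the token savings would come from.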

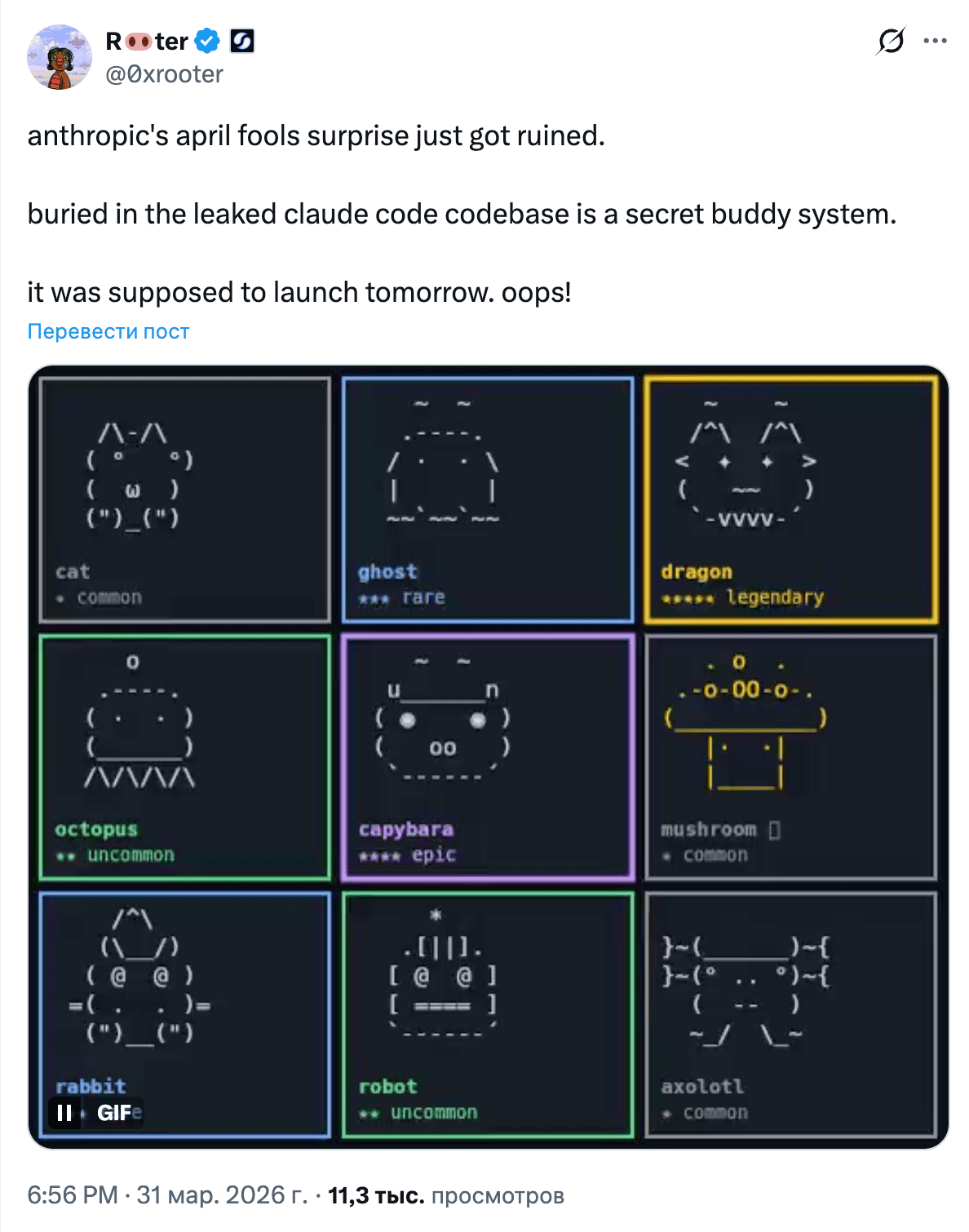

The hidden features were a separate source of amusement, of course. Kairos, an always-on background agent. Buddy, a terminal Tamagotchi with 18 variants and a rarity system (names hex-encoded to bypass internal scanners; there is an interesting repo on it). Ultraplan and Ultrathink for extended reasoning. Dream for overnight memory consolidation. USER_TYPE=ant for Anthropic employees with expanded telemetry. And my favorite detail: a regex for "wtf" and "frustrating" to detect user dissatisfaction and adapt behavior.
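
Both tricks are simple enough to sketch. The pattern below is an assumption built only from the two words mentioned above, not the regex from the leak, and the hex string is a made-up example (it decodes to "duck"), not one of the actual Buddy names:

```python
import re

# Hypothetical pattern: whole-word, case-insensitive match on the two known words.
FRUSTRATION = re.compile(r"\b(wtf|frustrating)\b", re.IGNORECASE)


def sounds_frustrated(message: str) -> bool:
    """True if the message contains one of the frustration markers."""
    return FRUSTRATION.search(message) is not None


def decode_hex_name(encoded: str) -> str:
    """Turn a hex-encoded name back into readable text."""
    return bytes.fromhex(encoded).decode("utf-8")


angry = sounds_frustrated("WTF, it deleted my tests again")
calm = sounds_frustrated("works great, thanks")
name = decode_hex_name("6475636b")
```

A plain-text scanner looking for the word itself would not match the hex form, which is presumably the whole point of the encoding.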

The community assembled Claw Code: it started as a Python port of the leaked sources, and now they’re actively rewriting it from scratch in Rust — 9 crates, 48 thousand lines, and a mock parity harness for compatibility checks against the original. In a week, the repo gained 171K stars, making it one of the fastest-growing repositories in GitHub history. In parallel, open orchestrators like open-multi-agent appeared, along with a whole OpenClaude community wiring Claude Code tools into any model.

But the main takeaway from the leak isn’t Buddy or the regex. It’s that the competitive advantage of coding agents lies not in the model, but in the harness. As people pointed out on Twitter: "Beyond raw model capability, the real gap in coding tools is the harness." And the analysis of the codebase confirmed it: a huge chunk of the code is model-specific conditionals, error handling, diagnostics, and integrations. Pure engineering work, and a lot of it. Now that work is public, and every competitor will study it.

The cherry on top: that same day, malicious packages color-diff-napi and modifiers-napi appeared on npm, targeting anyone trying to compile the leaked code locally. A supply-chain attack layered on top of a leak — classic. Last week I wrote about an attack via LiteLLM, and here’s another reminder: in the agent world, every npm install can cost you your credentials.

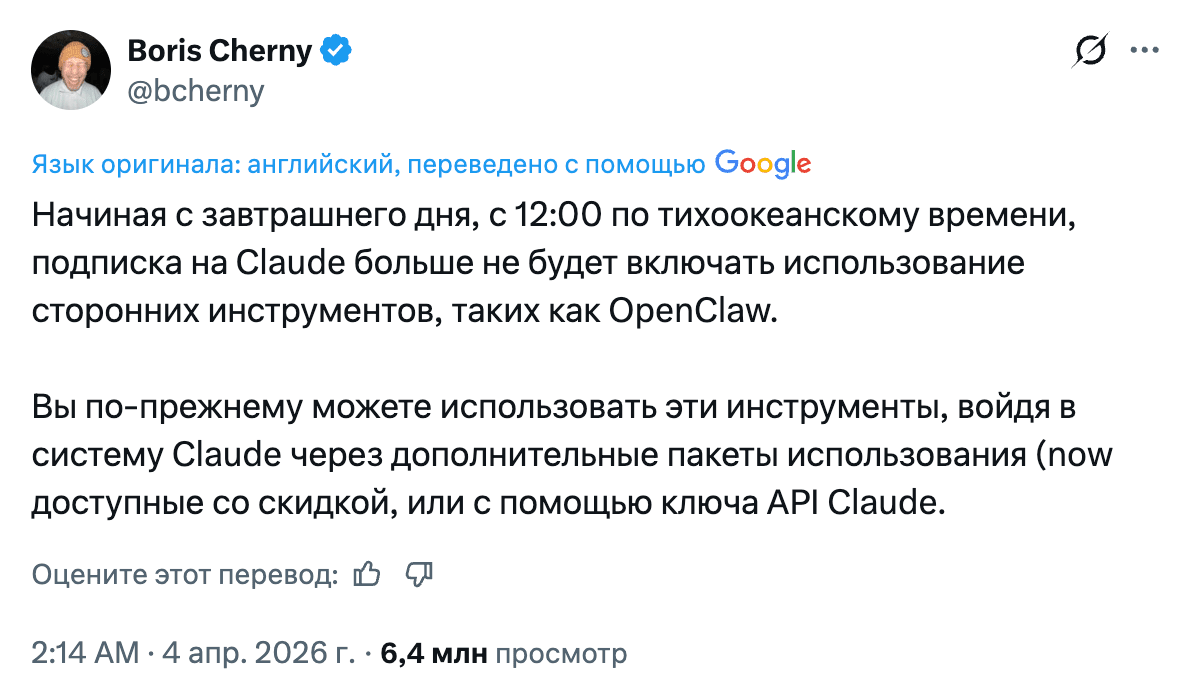

And on April 4, Anthropic capped the week with another decision. Boris Cherny announced that Claude subscriptions no longer cover usage through third-party harnesses like OpenClaw. OAuth tokens now work only in Claude Code and claude.ai. Want to use OpenClaw with Claude? Pay through extra usage or use an API key. Subscriptions "were not designed for third-party tool usage patterns," Boris explained.

OpenClaw creator Peter Steinberger (now at OpenAI) tried to negotiate with Anthropic together with Dave Morin. The most they got was a one-week delay. His comment sounded bitter: "They copy popular features into their closed harness, then block open source." Peter posted the command `models auth login --provider anthropic --method cli --set-default` as a workaround, but it apparently no longer works.

As compensation, Anthropic gave every subscriber a one-time bonus equal to one month of their subscription (claimable until April 17 under the Usage tab), up to 30% off extra usage bundles, and the option of a full refund. DHH, creator of Ruby on Rails, called the decision "openly hostile to customers." Developers who were used to running Opus 4.6 in OpenClaw for a fixed $200/month looked at API pricing and panicked: the same workloads could cost $1,000+. A wave of migration followed: some ran to Codex (OpenAI immediately welcomed the refugees, and Saint Tibo blessed us with higher limits), while others remembered the Chinese coding plans from Kimi, GLM, MiniMax, and Qwen. Running open-source models locally suddenly looked even more attractive.

Google DeepMind released Gemma 4 on April 2, and this time it’s the real deal: Apache 2.0 (finally a normal license instead of the previous restrictions), four sizes (31B dense, 26B MoE with 4B active, E4B and E2B for mobile), text + images + audio, up to 256K context. On Arena, Gemma 4 31B ranked third among open models, and 26B A4B ranked sixth. GPQA Diamond hit 85.7% for the reasoning version. There’s even a native phone app.

What about performance? On an RTX 4090, the 26B A4B model delivers 162 tok/s with native 262K context, using 19.5 GB of VRAM. On a Mac mini M4 with 16 GB, it gets 34 tok/s. Georgi Gerganov, creator of llama.cpp, showed 300 tok/s in a demo with built-in WebUI and MCP support (with the caveat that speculative decoding was involved). Day-0 support landed in llama.cpp, Ollama (0.20+), vLLM, LM Studio, and Transformers.js. The ecosystem was ready before the model was.

At the same time, on release day, a tokenizer bug surfaced in llama.cpp: the model was producing nonsense on simple tasks. Everything worked in Google AI Studio, but not locally. A classic day-zero story, and exactly why I keep saying: don’t trust first impressions, wait a couple of days and a few patches.

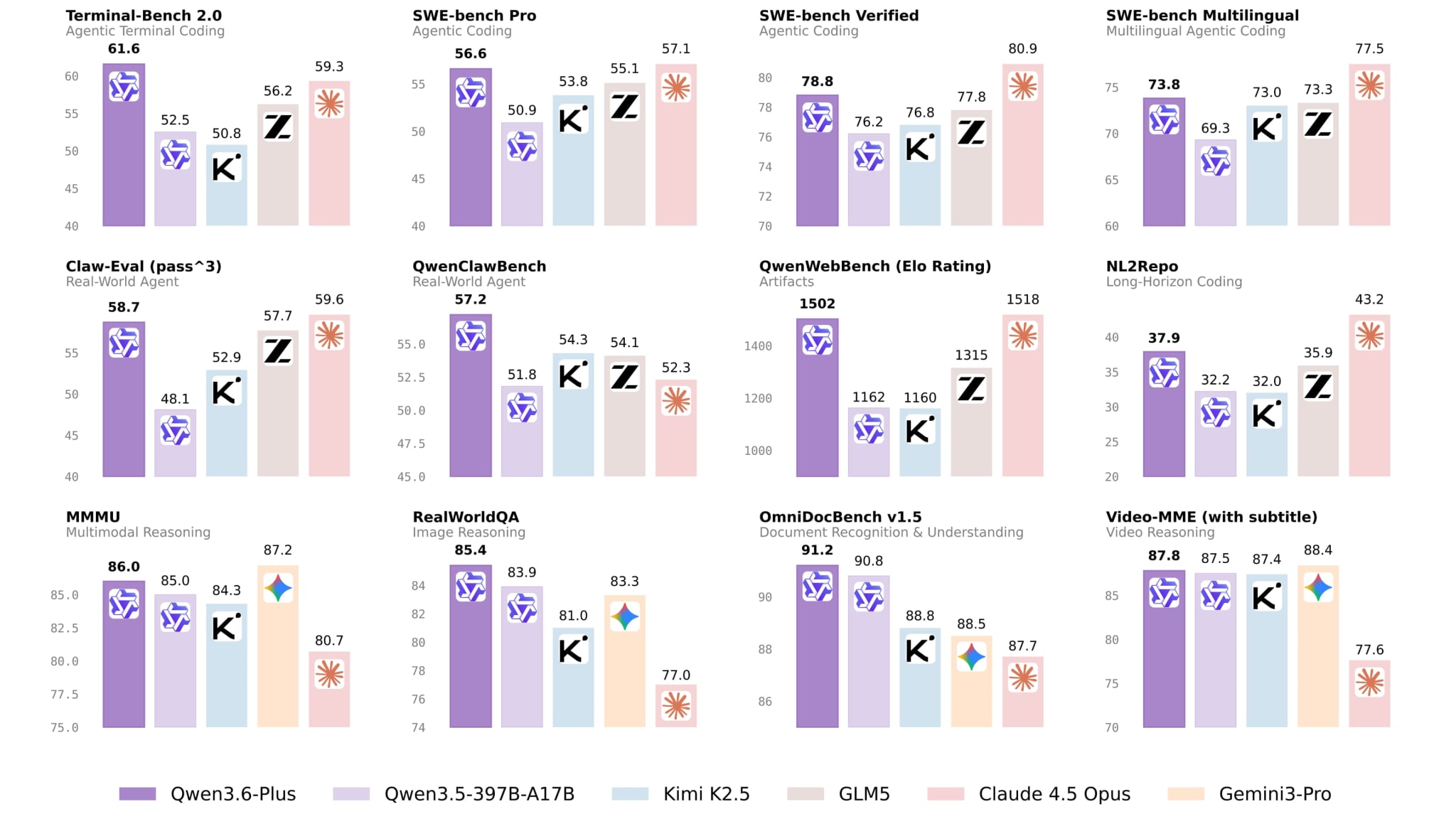

Alibaba introduced Qwen 3.6-Plus and claims it can compete with Western flagships: SWE-bench Verified 78.8 (Opus 4.6 has 80.9), GPQA Diamond 90.4, one million tokens of context. The focus is on native multimodal agents, with a promise of smaller open-source versions. For now, the model is available for free on OpenRouter from a single provider at an average speed of 40 tok/s. In a benchmark comparison, Qwen3.5-27B beats Gemma 4 31B almost everywhere except multilingual tasks. The community is testing 3.6-Plus on real tasks and notes that the model can sometimes be "lazy," giving short Gemini-style answers. But if you push it with a system prompt, it works. Also this week, Qwen3.5-Omni came out with audio-visual vibe coding: building websites by voice from video instructions. It takes 10 hours of audio input and recognizes speech in 113 languages.

Nous Research shipped Hermes Agent v0.7.0, and this is a serious release: modular memory through a plugin system (Honcho, vector stores, custom databases), credential pools with automatic key rotation on 401 errors, Camofox for stealth browsing, inline diffs in the TUI. 168 PRs, 46 closed issues. Twitter is full of posts from people migrating from OpenClaw to Hermes and liking the results. I tried it myself and still haven’t figured out what makes Hermes fundamentally better than OpenClaw for my use cases. Maybe it’s the memory system: Hermes now has a modular one that works out of the box, while OpenClaw relies on MEMORY.md and /memory. If you actively use Hermes, tell me in the comments what sold you on it.
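
The credential pool idea is worth a quick illustration. A minimal sketch, assuming a pool that moves on to the next key whenever a request comes back with HTTP 401 and gives up once every key has been rejected. `CredentialPool`, `send`, and the fake keys are hypothetical names for this example, not Hermes Agent's actual API:

```python
class CredentialPool:
    """Rotate through API keys when the current one is rejected (illustrative)."""

    def __init__(self, keys):
        if not keys:
            raise ValueError("need at least one key")
        self._keys = list(keys)
        self._index = 0

    @property
    def current(self):
        return self._keys[self._index]

    def call(self, send):
        """send(key) -> (status_code, body). Try each key at most once."""
        for _ in range(len(self._keys)):
            status, body = send(self.current)
            if status != 401:
                return status, body
            # 401 means this key is no longer accepted: switch to the next one.
            self._index = (self._index + 1) % len(self._keys)
        raise RuntimeError("all keys were rejected with 401")


def fake_send(key):
    # Stand-in for a real HTTP request: only "key-2" is still valid.
    return (200, "ok") if key == "key-2" else (401, "unauthorized")


pool = CredentialPool(["key-1", "key-2", "key-3"])
result = pool.call(fake_send)
```

After the call, the pool stays on the working key, so later requests skip the revoked one.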

Cursor released version 3 on April 2. The main feature is Agents Window: parallel agents in worktrees, in the cloud, and over SSH from one interface. Design Mode lets you annotate elements directly in the browser. The /best-of-n command runs one task across several models in parallel and compares results. Smaller IDE improvements include the Await tool for waiting on background processes, fast diffs for large files, and searching past chats through @-mentions.
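
The best-of-n pattern itself fits in a dozen lines. A rough sketch, assuming each model is just a callable and the comparison is a scoring function. Here the "models" are plain lambdas and the score is output length, both placeholders for illustration. How Cursor actually compares results is not something this sketch claims to know:

```python
from concurrent.futures import ThreadPoolExecutor


def best_of_n(task, models, score):
    """Run `task` on every model in parallel; return (name, output) with the top score."""
    names = list(models)
    with ThreadPoolExecutor(max_workers=len(names)) as pool:
        # pool.map preserves input order, so outputs line up with names.
        outputs = list(pool.map(lambda name: models[name](task), names))
    results = dict(zip(names, outputs))
    winner = max(names, key=lambda name: score(results[name]))
    return winner, results[winner]


# Stand-in "models": plain functions instead of real API calls.
models = {
    "model_a": lambda task: task.upper(),
    "model_b": lambda task: task + " with tests",
}

winner, output = best_of_n("fix bug", models, score=len)
```

In a real setup the score would more likely come from running tests or from a human picking the winner.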

The coding-agent race just got even hotter, and cross-agent composition is already real: the Codex plugin for Claude Code lets you run reviews through ChatGPT right inside the Anthropic stack.

The llama.cpp project crossed 100 thousand GitHub stars. Georgi Gerganov wrote that 2026 may be the year when useful automation stops requiring flagship cloud models. And the evidence keeps showing up every week.

Flash-MoE runs Qwen3.5-397B on a 48 GB MacBook Pro: pure C + Metal, weights streamed from SSD, only active experts kept in memory, about 5.5 GB RAM and 4.4 tok/s. Not a speed record, but 397 billion parameters on a laptop. Transformers.js v4 added a WebGPU backend for browsers with 200+ architectures. And Gemma 4 E4B runs on iPhone via Swift MLX. Local AI has stopped being an enthusiast hobby and become infrastructure.
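
The "only active experts kept in memory" part can be pictured as a small cache in front of the SSD. A minimal sketch using LRU eviction, which is my assumption for illustration. Flash-MoE's real strategy, written in C and Metal, may work quite differently, and `load_expert` here returns a string instead of actual weights:

```python
from collections import OrderedDict


class ExpertCache:
    """Keep at most `capacity` experts in memory, loading the rest on demand."""

    def __init__(self, load_expert, capacity):
        self._load = load_expert      # reads one expert's weights from disk
        self._capacity = capacity
        self._cache = OrderedDict()   # expert_id -> weights, least recent first
        self.loads = 0                # how many times we had to hit the disk

    def get(self, expert_id):
        if expert_id in self._cache:
            self._cache.move_to_end(expert_id)  # mark as most recently used
            return self._cache[expert_id]
        weights = self._load(expert_id)
        self.loads += 1
        self._cache[expert_id] = weights
        if len(self._cache) > self._capacity:
            self._cache.popitem(last=False)     # evict the least recently used
        return weights

    def resident(self):
        return list(self._cache)


cache = ExpertCache(load_expert=lambda i: f"weights-{i}", capacity=2)
for expert_id in [0, 1, 0, 2, 1]:
    cache.get(expert_id)
```

With room for two experts, this routing sequence costs four disk loads out of five lookups, which gives a feel for why a setup like this trades speed for memory.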

If the competitive advantage of a coding agent is in the harness, and Claude Code is now public, what exactly is Anthropic selling for $200 a month? The model? That can be replaced with a Chinese one. The infrastructure? People are rewriting it in Rust. Maybe they should monetize Buddy instead: 18 variants, rarity system, loot boxes in the terminal. Labubu for developers, $50 for a legendary one. Pop Mart should be nervous.

Stay curious.